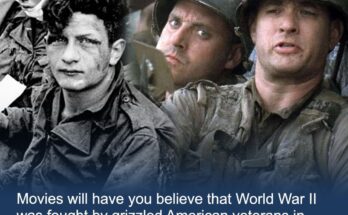

The Dumbest Things Movies Have Us Believe About WWII

If Hollywood has taught the world anything about WWII, it’s that America won it single-handedly, and did so with some serious cinematic flair. But while it’s somewhat understandable …
